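Deploying ELK on a Kubernetes Cluster

1. Preface

In this deployment every ELK component runs inside the k8s cluster: filebeat, logstash, elasticsearch, and kibana. Elasticsearch is deployed as a cluster, and all components use version 7.17.10.

2. Deployment

Deploying the elasticsearch cluster

Before deploying the elasticsearch cluster, tune the kernel setting below on the hosts. Every k8s node needs it; without it the deployment fails.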

vi /etc/sysctl.conf

vm.max_map_count=262144

Reload the configuration so the change takes effect

sysctl -p
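To confirm that the setting took effect on each node, a small check like the one below could be run. This is a minimal sketch: the helper name `check_max_map_count` is hypothetical, and it assumes a Linux host where the live value can be read from `/proc/sys/vm/max_map_count`.

```shell
#!/usr/bin/env bash
# Minimum value written to /etc/sysctl.conf in the step above.
REQUIRED_MAX_MAP_COUNT=262144

# check_max_map_count [VALUE]  (hypothetical helper)
# Succeeds when VALUE meets the minimum. With no argument it reads the
# live kernel value from /proc/sys/vm/max_map_count.
check_max_map_count() {
  local current="${1:-$(cat /proc/sys/vm/max_map_count)}"
  if [ "$current" -ge "$REQUIRED_MAX_MAP_COUNT" ]; then
    echo "ok: vm.max_map_count=$current"
  else
    echo "too low: vm.max_map_count=$current (need >= $REQUIRED_MAX_MAP_COUNT)" >&2
    return 1
  fi
}
```

Calling `check_max_map_count` with no argument checks the running kernel; a non-zero exit status means the node still needs the sysctl change.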

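Run the following steps on any master node of the k8s cluster.

Create a directory for the YAML files

mkdir /opt/elk && cd /opt/elk

The es cluster is deployed behind a headless service. It needs PVs to store the es data, Services to provide access, and a StatefulSet to run the es nodes.

Create the YAML files for the headless Service and for the externally reachable NodePort Service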

vi es-service.yaml

apiVersion: v1
kind: Service
metadata:
  name: elasticsearch
  namespace: elk
  labels:
    app: elasticsearch
spec:
  selector:
    app: elasticsearch
  clusterIP: None
  ports:
    - port: 9200
      name: db
    - port: 9300
      name: inter

vi es-service-nodeport.yaml

apiVersion: v1
kind: Service
metadata:
  name: elasticsearch-nodeport
  namespace: elk
  labels:
    app: elasticsearch
spec:
  selector:
    app: elasticsearch
  type: NodePort
  ports:
    - port: 9200
      name: db
      nodePort: 30017
    - port: 9300
      name: inter
      nodePort: 30018

Create the PV YAML file (NFS shared storage is used here)

vi es-pv.yaml

apiVersion: v1
kind: PersistentVolume
metadata:
  name: es-pv1
spec:
  storageClassName: es-pv # storage class name, used to match this PV to a claim
  capacity:
    storage: 30Gi
  accessModes:
    - ReadWriteMany
  persistentVolumeReclaimPolicy: Retain
  nfs:
    path: /volume2/k8s-data/es/es-pv1
    server: 10.1.13.99
---
apiVersion: v1
kind: PersistentVolume
metadata:
  name: es-pv2
spec:
  storageClassName: es-pv # storage class name, used to match this PV to a claim
  capacity:
    storage: 30Gi
  accessModes:
    - ReadWriteMany
  persistentVolumeReclaimPolicy: Retain
  nfs:
    path: /volume2/k8s-data/es/es-pv2
    server: 10.1.13.99
---
apiVersion: v1
kind: PersistentVolume
metadata:
  name: es-pv3
spec:
  storageClassName: es-pv # storage class name, used to match this PV to a claim
  capacity:
    storage: 30Gi
  accessModes:
    - ReadWriteMany
  persistentVolumeReclaimPolicy: Retain
  nfs:
    path: /volume2/k8s-data/es/es-pv3
    server: 10.1.13.99
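The three PersistentVolumes differ only in their name and NFS path, so the file could also be generated rather than typed by hand. The following is a sketch under that assumption; the helper name `gen_es_pvs` and its argument order are hypothetical, and the defaults simply repeat the values used in es-pv.yaml.

```shell
#!/usr/bin/env bash
# gen_es_pvs [COUNT] [NFS_SERVER] [BASE_PATH]  (hypothetical helper)
# Prints COUNT PersistentVolume manifests named es-pv1..es-pvN to stdout.
gen_es_pvs() {
  local count="${1:-3}"
  local server="${2:-10.1.13.99}"
  local base="${3:-/volume2/k8s-data/es}"
  local i=1
  while [ "$i" -le "$count" ]; do
    # Separate YAML documents with "---", but not before the first one.
    if [ "$i" -gt 1 ]; then echo "---"; fi
    cat <<EOF
apiVersion: v1
kind: PersistentVolume
metadata:
  name: es-pv${i}
spec:
  storageClassName: es-pv
  capacity:
    storage: 30Gi
  accessModes:
    - ReadWriteMany
  persistentVolumeReclaimPolicy: Retain
  nfs:
    path: ${base}/es-pv${i}
    server: ${server}
EOF
    i=$((i + 1))
  done
}
```

For example, `gen_es_pvs 3 > es-pv.yaml` would produce one PV per es replica, equivalent to the file above.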

Create the StatefulSet YAML file

vi es-setafulset.yaml

apiVersion: apps/v1
kind: StatefulSet
metadata:
  name: elasticsearch
  namespace: elk
  labels:
    app: elasticsearch
spec:
  podManagementPolicy: Parallel
  serviceName: elasticsearch
  replicas: 3
  selector:
    matchLabels:
      app: elasticsearch
  template:
    metadata:
      labels:
        app: elasticsearch
    spec:
      tolerations: # tolerates the control-plane taint so pods can also run on master nodes; optional
        - key: "node-role.kubernetes.io/control-plane"
          operator: "Exists"
          effect: NoSchedule
      containers:
        - image: elasticsearch:7.17.10
          name: elasticsearch
          resources:
            limits:
              cpu: 1
              memory: 2Gi
            requests:
              cpu: 0.5
              memory: 500Mi
          env:
            - name: network.host
              value: "_site_"
            - name: node.name
              value: "${HOSTNAME}"
            - name: discovery.zen.minimum_master_nodes # legacy Zen discovery setting; accepted but ignored by 7.x
              value: "2"
            - name: discovery.seed_hosts # tells a node joining the cluster where to discover the other nodes; list the hostnames or IPs of some nodes in the cluster (the headless-service addresses are used here)
              value: "elasticsearch-0.elasticsearch.elk.svc.cluster.local,elasticsearch-1.elasticsearch.elk.svc.cluster.local,elasticsearch-2.elasticsearch.elk.svc.cluster.local"
            - name: cluster.initial_master_nodes # master-eligible nodes that take part in the first master election when a brand-new cluster bootstraps; the names must match node.name
              value: "elasticsearch-0,elasticsearch-1,elasticsearch-2"
            - name: cluster.name
              value: "es-cluster"
            - name: ES_JAVA_OPTS
              value: "-Xms512m -Xmx512m"
          ports:
            - containerPort: 9200
              name: db
              protocol: TCP
            - name: inter
              containerPort: 9300
          volumeMounts:
            - name: elasticsearch-data
              mountPath: /usr/share/elasticsearch/data
  volumeClaimTemplates:
    - metadata:
        name: elasticsearch-data
      spec:
        storageClassName: "es-pv"
        accessModes: [ "ReadWriteMany" ]
        resources:
          requests:
            storage: 30Gi
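The `discovery.seed_hosts` value follows the stable DNS names that a StatefulSet behind a headless Service gives its pods: `<statefulset>-<ordinal>.<service>.<namespace>.svc.cluster.local`. The sketch below rebuilds that list for a given replica count. The helper name `es_seed_hosts` is hypothetical, and it assumes the default cluster domain `cluster.local`.

```shell
#!/usr/bin/env bash
# es_seed_hosts NAME SERVICE NAMESPACE REPLICAS  (hypothetical helper)
# Prints the comma-separated pod DNS names of a StatefulSet, assuming the
# default cluster domain "cluster.local".
es_seed_hosts() {
  local name="$1" service="$2" namespace="$3" replicas="$4"
  local hosts="" i=0
  while [ "$i" -lt "$replicas" ]; do
    # ${hosts:+,} expands to a comma only when hosts is already non-empty.
    hosts="${hosts}${hosts:+,}${name}-${i}.${service}.${namespace}.svc.cluster.local"
    i=$((i + 1))
  done
  echo "$hosts"
}
```

`es_seed_hosts elasticsearch elasticsearch elk 3` prints the same string used in the manifest above. If `replicas` changes, both `discovery.seed_hosts` and `cluster.initial_master_nodes` need updating to match.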

Create the namespace for the elk services

kubectl create namespace elk

Create the resources from the YAML files

kubectl create -f es-pv.yaml
kubectl create -f es-service-nodeport.yaml
kubectl create -f es-service.yaml
kubectl create -f es-setafulset.yaml

Check that the es pods started correctly

kubectl get pod -n elk

Check that the elasticsearch cluster is healthy

http://10.1.60.119:30017/_cluster/state/master_node,nodes?pretty

The response should show that the cluster has recognized all three es nodes.
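The same check can be scripted instead of read by eye. The sketch below assumes the `_cat/health` API is queried with only its `status` and `node.total` columns, which returns a single line such as `green 3`; the helper name `es_cluster_ok` is hypothetical.

```shell
#!/usr/bin/env bash
# es_cluster_ok EXPECTED_NODES  (hypothetical helper)
# Reads one line "<status> <node.total>" on stdin, as printed by
#   GET /_cat/health?h=status,node.total
# and succeeds only if the status is not red and the node count matches.
es_cluster_ok() {
  local expected="$1" status="" nodes=""
  # Fail on empty input; tolerate a last line without a trailing newline.
  read -r status nodes || [ -n "$status" ] || return 1
  echo "status=$status nodes=$nodes"
  [ "$status" != "red" ] && [ "$nodes" -eq "$expected" ]
}
```

With the NodePort address used above, this could be run as `curl -s 'http://10.1.60.119:30017/_cat/health?h=status,node.total' | es_cluster_ok 3`.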

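The elasticsearch cluster deployment is complete.

Deploying the kibana service

kibana is deployed with a Deployment controller, and a Service exposes it for external access.

Create the Deployment YAML file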

vi kibana-deployment.yaml

apiVersion: apps/v1
kind: Deployment
metadata:
  name: kibana
  namespace: elk
  labels:
    app: kibana
spec:
  replicas: 1
  selector:
    matchLabels:
      app: kibana
  template:
    metadata:
      labels:
        app: kibana
    spec:
      tolerations:
        - key: "node-role.kubernetes.io/control-plane"
          operator: "Exists"
          effect: NoSchedule
      containers:
        - name: kibana
          image: kibana:7.17.10
          resources:
            limits:
              cpu: 1
              memory: 1G
            requests:
              cpu: 0.5
              memory: 500Mi
          env:
            - name: ELASTICSEARCH_HOSTS
              value: http://elasticsearch:9200
          ports:
            - containerPort: 5601
              protocol: TCP

Create the Service YAML file

vi kibana-service.yaml

apiVersion: v1
kind: Service
metadata:
  name: kibana
  namespace: elk
spec:
  ports:
    - port: 5601
      protocol: TCP
      targetPort: 5601
      nodePort: 30019
  type: NodePort
  selector:
    app: kibana

Create the resources from the YAML files

kubectl create -f kibana-service.yaml
kubectl create -f kibana-deployment.yaml

Check that kibana is running

kubectl get pod -n elk

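Deploying the logstash service

logstash is also deployed with a Deployment controller. It needs a ConfigMap to hold the logstash configuration and a Service so that other workloads can reach it.

Create the ConfigMap YAML file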

vi logstash-configmap.yaml

apiVersion: v1
kind: ConfigMap
metadata:
  name: logstash-configmap
  namespace: elk
  labels:
    app: logstash
data:
  logstash.conf: |
    input {
      beats {
        port => 5044 # port that receives the logs sent by beats
        # codec => "json"
      }
    }
    filter {
    }
    output {
      # The commented-out stdout block below prints collected events to the
      # logstash log; it is mainly useful for testing what the events contain.
      # stdout {
      #   codec => rubydebug
      # }
      elasticsearch {
        hosts => "elasticsearch:9200"
        index => "nginx-%{+YYYY.MM.dd}"
      }
    }
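The `index => "nginx-%{+YYYY.MM.dd}"` setting writes each event to an index named after the date of its `@timestamp`, which Logstash formats in UTC, so a new index appears each day. As a sketch, the name of today's index could be computed as below in order to look it up in elasticsearch; the helper name `logstash_index_name` is hypothetical.

```shell
#!/usr/bin/env bash
# logstash_index_name [PREFIX]  (hypothetical helper)
# Prints today's index name in the PREFIX-YYYY.MM.dd form, using the UTC
# date to match how Logstash expands %{+YYYY.MM.dd}.
logstash_index_name() {
  local prefix="${1:-nginx}"
  echo "${prefix}-$(date -u +%Y.%m.%d)"
}
```

Once filebeat is shipping logs, `curl -s "http://10.1.60.119:30017/_cat/indices/$(logstash_index_name)?v"` should list the index, assuming the NodePort address used earlier.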

Create the Deployment YAML file

vi logstash-deployment.yaml

apiVersion: apps/v1
kind: Deployment
metadata:
  name: logstash
  namespace: elk
spec:
  replicas: 1
  selector:
    matchLabels:
      app: logstash
  template:
    metadata:
      labels:
        app: logstash
    spec:
      containers:
        - name: logstash
          image: logstash:7.17.10
          imagePullPolicy: IfNotPresent
          ports:
            - containerPort: 5044
          volumeMounts:
            - name: config-volume
              mountPath: /usr/share/logstash/pipeline/
      volumes:
        - name: config-volume
          configMap:
            name: logstash-configmap
            items:
              - key: logstash.conf
                path: logstash.conf

Create the Service YAML file (only logs from services running inside k8s are collected here, so the Service is not exposed outside the cluster)

vi logstash-service.yaml

apiVersion: v1
kind: Service
metadata:
  name: logstash
  namespace: elk
spec:
  ports:
    - port: 5044
      targetPort: 5044
      protocol: TCP
  selector:
    app: logstash
  type: ClusterIP

Create the resources from the YAML files

kubectl create -f logstash-configmap.yaml
kubectl create -f logstash-service.yaml
kubectl create -f logstash-deployment.yaml

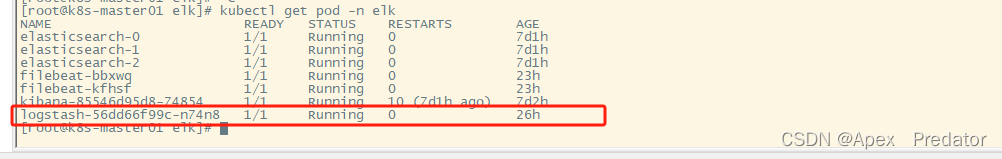

Check that the logstash service started correctly

kubectl get pod -n elk

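Deploying the filebeat service

filebeat is deployed as a DaemonSet on every k8s worker node to collect container logs. It also needs a ConfigMap for its configuration file, plus RBAC permissions: the configuration uses filebeat's autodiscover feature to collect logs across the k8s cluster automatically, and that requires access to the k8s API.

Create the RBAC YAML file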

vi filebeat-rbac.yaml

apiVersion: v1
kind: ServiceAccount
metadata:
  name: filebeat
  namespace: elk
  labels:
    app: filebeat
---
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRole
metadata:
  name: filebeat
  labels:
    app: filebeat
rules:
  - apiGroups: [""]
    resources: ["namespaces", "pods", "nodes"] # resources the account may access
    verbs: ["get", "list", "watch"] # operations allowed on those resources
---
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRoleBinding
metadata:
  name: filebeat
subjects:
  - kind: ServiceAccount
    name: filebeat
    namespace: elk
roleRef:
  kind: ClusterRole
  name: filebeat
  apiGroup: rbac.authorization.k8s.io
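After these RBAC objects are created, the grant can be verified with `kubectl auth can-i`, impersonating the ServiceAccount. The sketch below loops over the resources and verbs from the ClusterRole; the helper name `check_filebeat_rbac` and the `KUBECTL` override variable are hypothetical conveniences, not part of the original setup.

```shell
#!/usr/bin/env bash
# KUBECTL can be overridden (for example KUBECTL=echo) to preview the
# commands without contacting a cluster.
KUBECTL="${KUBECTL:-kubectl}"

# check_filebeat_rbac  (hypothetical helper)
# Asks the API server whether the filebeat ServiceAccount may perform each
# verb on each resource granted by the ClusterRole. Stops at the first "no".
check_filebeat_rbac() {
  local sa="system:serviceaccount:elk:filebeat"
  local resource verb
  for resource in namespaces pods nodes; do
    for verb in get list watch; do
      $KUBECTL auth can-i "$verb" "$resource" --as="$sa" || return 1
    done
  done
}
```

Each call prints `yes` or `no`; nine `yes` answers mean the binding works as intended.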

Create the ConfigMap YAML file

vi filebeat-configmap.yaml

apiVersion: v1
kind: ConfigMap
metadata:
  name: filebeat-config
  namespace: elk
data:
  filebeat.yml: |
    filebeat.autodiscover: # use filebeat's autodiscover feature
      providers:
        - type: kubernetes # the k8s provider
          templates: # templates describing what to collect
            - condition:
                and:
                  - or:
                      - equals:
                          kubernetes.labels: # select pods to collect from by label
                            app: foundation
                      - equals:
                          kubernetes.labels:
                            app: api-gateway
                  - equals: # select pods to collect from by namespace
                      kubernetes.namespace: java-service
              config: # log input settings, using the k8s log paths
                - type: container
                  symlinks: true
                  paths: # build the path from variables so that only the matching service's logs are collected
                    - /var/log/containers/${data.kubernetes.pod.name}_${data.kubernetes.namespace}_${data.kubernetes.container.name}-*.log
    output.logstash:
      hosts: ['logstash:5044']

For more on filebeat autodiscover for k8s, see the official Elastic documentation, which lists many more k8s fields that can be used.

Reference: Autodiscover | Filebeat Reference [8.12] | Elastic
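The `paths` template in the ConfigMap relies on the file naming under /var/log/containers, where each file is named `<pod>_<namespace>_<container>-<container id>.log`. As a sketch, the glob that the template expands to for one container can be reproduced as follows; the helper name `container_log_glob` and the pod name in the example are hypothetical.

```shell
#!/usr/bin/env bash
# container_log_glob POD NAMESPACE CONTAINER  (hypothetical helper)
# Prints the glob that the filebeat paths template resolves to for one
# container; the trailing * stands for the container id.
container_log_glob() {
  local pod="$1" namespace="$2" container="$3"
  echo "/var/log/containers/${pod}_${namespace}_${container}-*.log"
}
```

For a pod named `api-gateway-0` in the `java-service` namespace, `container_log_glob api-gateway-0 java-service api-gateway` prints `/var/log/containers/api-gateway-0_java-service_api-gateway-*.log`. Listing that glob on the node is a quick way to confirm that the files filebeat expects exist.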

Create the DaemonSet YAML file

vi filebeat-daemonset.yaml

apiVersion: apps/v1
kind: DaemonSet
metadata:
  name: filebeat
  namespace: elk
  labels:
    app: filebeat
spec:
  selector:
    matchLabels:
      app: filebeat
  template:
    metadata:
      labels:
        app: filebeat
    spec:
      serviceAccountName: filebeat
      terminationGracePeriodSeconds: 30
      containers:
        - name: filebeat
          image: elastic/filebeat:7.17.10
          args: [
            "-c", "/etc/filebeat.yml",
            "-e",
          ]
          env:
            - name: NODE_NAME
              valueFrom:
                fieldRef:
                  fieldPath: spec.nodeName
          securityContext:
            runAsUser: 0
          resources:
            limits:
              cpu: 200m
              memory: 200Mi
            requests:
              cpu: 100m
              memory: 100Mi
          volumeMounts:
            - name: config
              mountPath: /etc/filebeat.yml
              readOnly: true
              subPath: filebeat.yml
            - name: log # three log paths are mounted because the files under /var/log/containers are symlinks to files in other directories
              mountPath: /var/log/containers
              readOnly: true
            - name: pod-log # target of the /var/log/containers symlinks; still not the real path, these are symlinks too
              mountPath: /var/log/pods
              readOnly: true
            - name: containers-log # the real log files live here (Docker runtime); unless all three paths are mounted, the log contents cannot be read
              mountPath: /var/lib/docker/containers
              readOnly: true
      volumes:
        - name: config
          configMap:
            defaultMode: 0600
            name: filebeat-config
        - name: log
          hostPath:
            path: /var/log/containers
        - name: pod-log
          hostPath:
            path: /var/log/pods
        - name: containers-log
          hostPath:
            path: /var/lib/docker/containers

Create the resources from the YAML files

kubectl create -f filebeat-rbac.yaml
kubectl create -f filebeat-configmap.yaml
kubectl create -f filebeat-daemonset.yaml

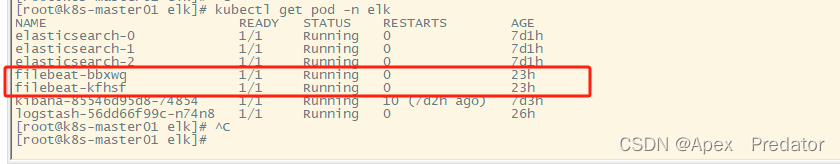

Check that the filebeat service started correctly

kubectl get pod -n elk
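Instead of polling `kubectl get pod` by hand, `kubectl rollout status` can block until each workload is ready. The following is a minimal sketch that covers the four workloads created in this walkthrough; the helper name `wait_for_elk`, the `KUBECTL` override variable, and the 300s default timeout are assumptions.

```shell
#!/usr/bin/env bash
# KUBECTL can be overridden (for example KUBECTL=echo) to preview the
# commands without contacting a cluster.
KUBECTL="${KUBECTL:-kubectl}"

# wait_for_elk [TIMEOUT]  (hypothetical helper)
# Waits for every ELK workload in the elk namespace to finish rolling out.
# Returns non-zero as soon as one of them fails or times out.
wait_for_elk() {
  local timeout="${1:-300s}"
  local workload
  for workload in statefulset/elasticsearch deployment/kibana \
                  deployment/logstash daemonset/filebeat; do
    $KUBECTL rollout status "$workload" -n elk --timeout="$timeout" || return 1
  done
}
```

Running `wait_for_elk 120s` after the `kubectl create` steps returns only when all four workloads report ready, or fails at the first one that does not.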

The ELK deployment inside the k8s cluster is now complete.
